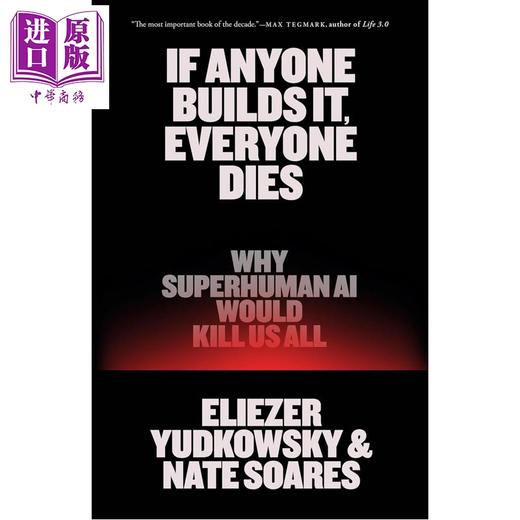

[中商 Original Edition] If Anyone Builds It, Everyone Dies: Why Superhuman AI Would Kill Us All, English original edition, Eliezer Yudkowsky

| Shipping: | ¥ 5.00-30.00 |

| Stock: | 9 items |

Product Details

If Anyone Builds It, Everyone Dies: Why Superhuman AI Would Kill Us All (Chinese title: 造极必殒:为什么超智能AI会导致人类灭绝)

Basic Information

ISBN13: 9780316595643

ISBN10: 0316595640

Format: Hardcover

Pub. Date: 2025-09-16

Publisher(s): Little, Brown and Company

Specifications listed on this page are for reference only; the physical item prevails.

About the Book

The scramble to create superhuman AI has put us on the path to extinction—but it’s not too late to change course, as two of the field’s earliest researchers explain in this clarion call for humanity.

“May prove to be the most important book of our time.”—Tim Urban, Wait But Why

In 2023, hundreds of AI luminaries signed an open letter warning that artificial intelligence poses a serious risk of human extinction. Since then, the AI race has only intensified. Companies and countries are rushing to build machines that will be smarter than any person. And the world is devastatingly unprepared for what would come next.

For decades, two signatories of that letter—Eliezer Yudkowsky and Nate Soares—have studied how smarter-than-human intelligences will think, behave, and pursue their objectives. Their research says that sufficiently smart AIs will develop goals of their own that put them in conflict with us—and that if it comes to conflict, an artificial superintelligence would crush us. The contest wouldn’t even be close.

How could a machine superintelligence wipe out our entire species? Why would it want to? Would it want anything at all? In this urgent book, Yudkowsky and Soares walk through the theory and the evidence, present one possible extinction scenario, and explain what it would take for humanity to survive.

The world is racing to build something truly new under the sun. And if anyone builds it, everyone dies.

“The best no-nonsense, simple explanation of the AI risk problem I've ever read.”—Yishan Wong, Former CEO of Reddit

About the Author

Eliezer Yudkowsky is a founding researcher of the field of AI alignment and played a major role in shaping the public conversation about smarter-than-human AI. He appeared on Time magazine's 2023 list of the 100 Most Influential People In AI, was one of the twelve public figures featured in The New York Times's "Who's Who Behind the Dawn of the Modern Artificial Intelligence Movement," and has been discussed or interviewed in The New Yorker, Newsweek, Forbes, Wired, Bloomberg, The Atlantic, The Economist, The Washington Post, and many other venues.

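- 中商进口商城 (verified WeChat official account)

- 中商进口商城 is a sales platform operated by 中华商务贸易有限公司 for original-edition books from the UK, the US, Japan, Korea, Hong Kong, and Taiwan. It aims to introduce, popularize, and bring in the latest and most valuable books and information from abroad and from Hong Kong and Taiwan for mainland readers.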
