[Zhongshang Original Edition] An Introduction to Computational Learning Theory, Original English Edition, Michael J. Kearns

Product Details

An Introduction to Computational Learning Theory

Basic Information

Series: The MIT Press

Format: Hardback, 222 pages

Publisher: MIT Press Ltd

Imprint: MIT Press

ISBN: 9780262111935

Published: 15 Aug 1994

Weight: 586 g

Dimensions: 187 x 237 x 18 mm

Page specifications are for reference only; the physical item takes precedence.

Book Description

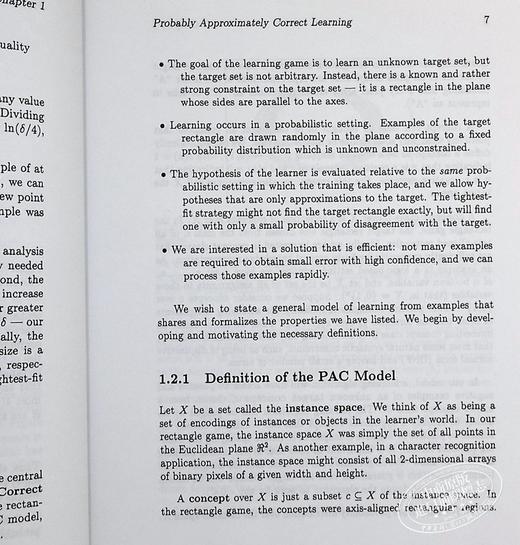

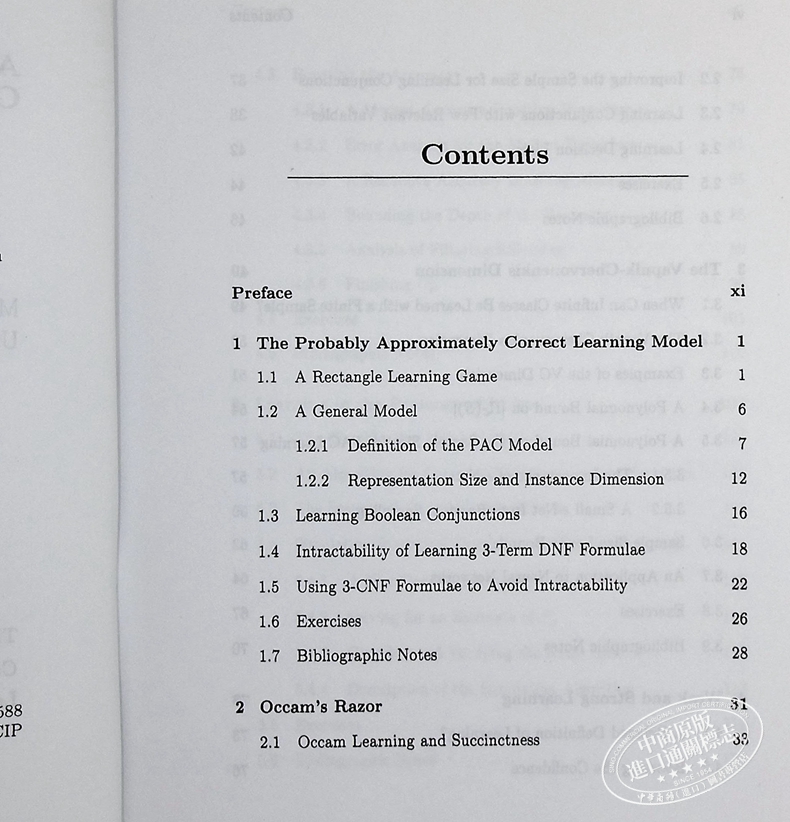

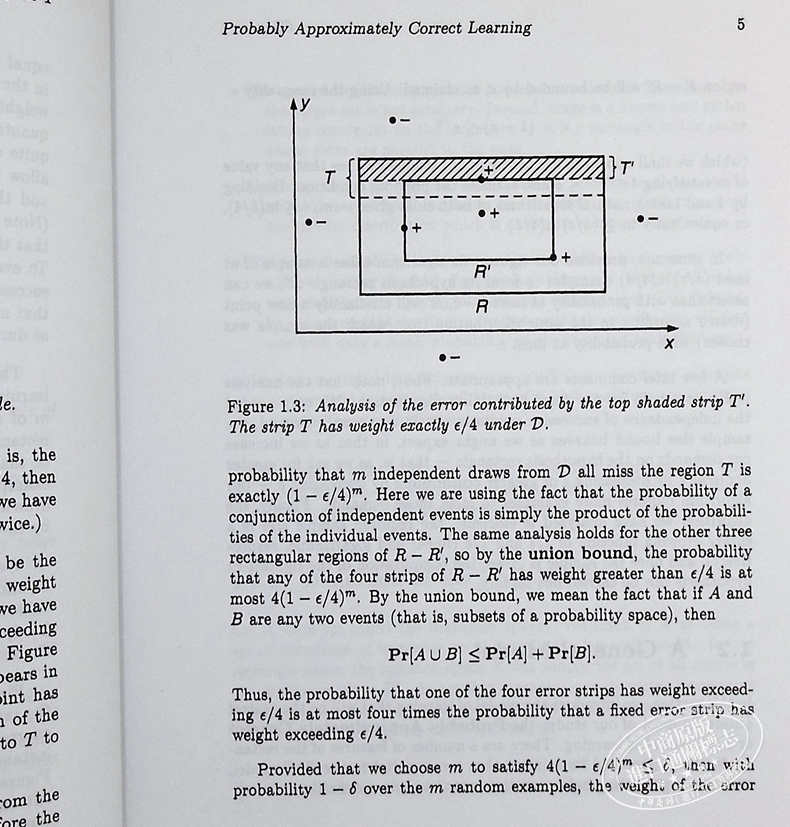

Emphasizing issues of computational efficiency, Michael Kearns and Umesh Vazirani introduce a number of central topics in computational learning theory for researchers and students in artificial intelligence, neural networks, theoretical computer science, and statistics. Computational learning theory is a new and rapidly expanding area of research that examines formal models of induction with the goals of discovering the common methods underlying efficient learning algorithms and identifying the computational impediments to learning. Each topic in the book has been chosen to elucidate a general principle, which is explored in a precise formal setting. Intuition has been emphasized in the presentation to make the material accessible to the nontheoretician while still providing precise arguments for the specialist. This balance is the result of new proofs of established theorems, and new presentations of the standard proofs. The topics covered include:

- the motivation, definitions, and fundamental results, both positive and negative, for the widely studied L. G. Valiant model of Probably Approximately Correct Learning
- Occam's Razor, which formalizes a relationship between learning and data compression
- the Vapnik-Chervonenkis dimension
- the equivalence of weak and strong learning
- efficient learning in the presence of noise by the method of statistical queries
- relationships between learning and cryptography, and the resulting computational limitations on efficient learning
- reducibility between learning problems
- algorithms for learning finite automata from active experimentation

About the Authors

Michael J. Kearns is Professor of Computer and Information Science at the University of Pennsylvania.

Umesh Vazirani is Roger A. Strauch Professor in the Electrical Engineering and Computer Sciences Department at the University of California, Berkeley.